Digital Image Foundations

How computers perceive images

2026-03-13

1. Digital Image Foundations

How computers store images

How We See: Light and The Human Eye

1. Illumination: Light from a source (e.g., the sun) hits an object.

2. Reflection: The object absorbs some colors and reflects others.

3. Capture: Reflected light enters the eye through the pupil.

4. Processing: The lens focuses light onto the retina. Photoreceptors (rods and cones) convert it to electrical signals for the brain.

How Computers “See”: The Digital Camera

1. Capture: Just like the eye, the camera captures reflected light.

2. Lens & Aperture: Light enters through an opening (aperture) and is focused by glass lenses.

3. The Sensor: Instead of a retina, light hits a digital sensor (CMOS/CCD).

4. Digitization: Millions of sensor pixels convert incoming photons into an electrical charge, which is translated into a digital matrix.

The Digital Sensor: Capturing Light

- Photodiodes: Each pixel on a sensor forms a tiny bucket collecting photons (light).

- Charge: More light \(\rightarrow\) more photons \(\rightarrow\) higher electrical charge.

- Bayer Filter: Sensors capture only light intensity (grayscale). To get color, a microscopic mosaic filter is placed over them so each pixel records only Red, Green, or Blue. The two missing colors at each pixel are then interpolated from neighboring pixels (demosaicing).

- Digitization: An Analog-to-Digital Converter (ADC) turns the electrical charge into a discrete number, typically from `0` to `255`.

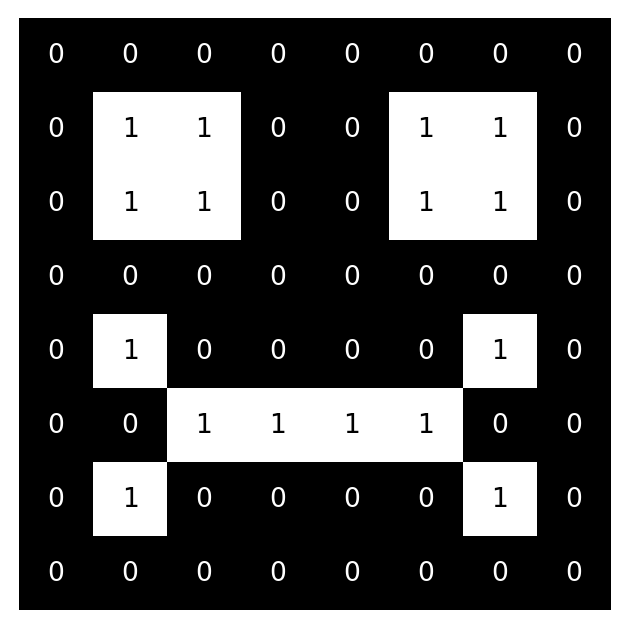
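The digitization step can be sketched with NumPy. This is an idealized illustration, not a model of any real sensor: the `digitize` helper, the `full_well` capacity, and the photon counts are all made-up for the example.

```python
import numpy as np

def digitize(photon_counts, full_well=1000.0):
    """Map collected charge to 8-bit codes (hypothetical, idealized ADC)."""
    counts = np.asarray(photon_counts, dtype=np.float64)
    # The "bucket" saturates once it is full, so clip at full_well.
    fraction = np.clip(counts / full_well, 0.0, 1.0)
    # Quantize the continuous fraction to 256 discrete levels.
    return np.round(fraction * 255).astype(np.uint8)

photons = np.array([0, 250, 500, 1000, 5000])   # made-up photon counts
codes = digitize(photons)
print(codes)   # [  0  64 128 255 255]
```

Note that the last two inputs map to the same code: once a pixel is saturated, extra light is lost.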

Grayscale Images: Pixels & Coordinates

- Images are 2D matrices of numbers. The origin `(0,0)` is top-left.

- Example: an 8x8 matrix where `0` is black and `1` is white.

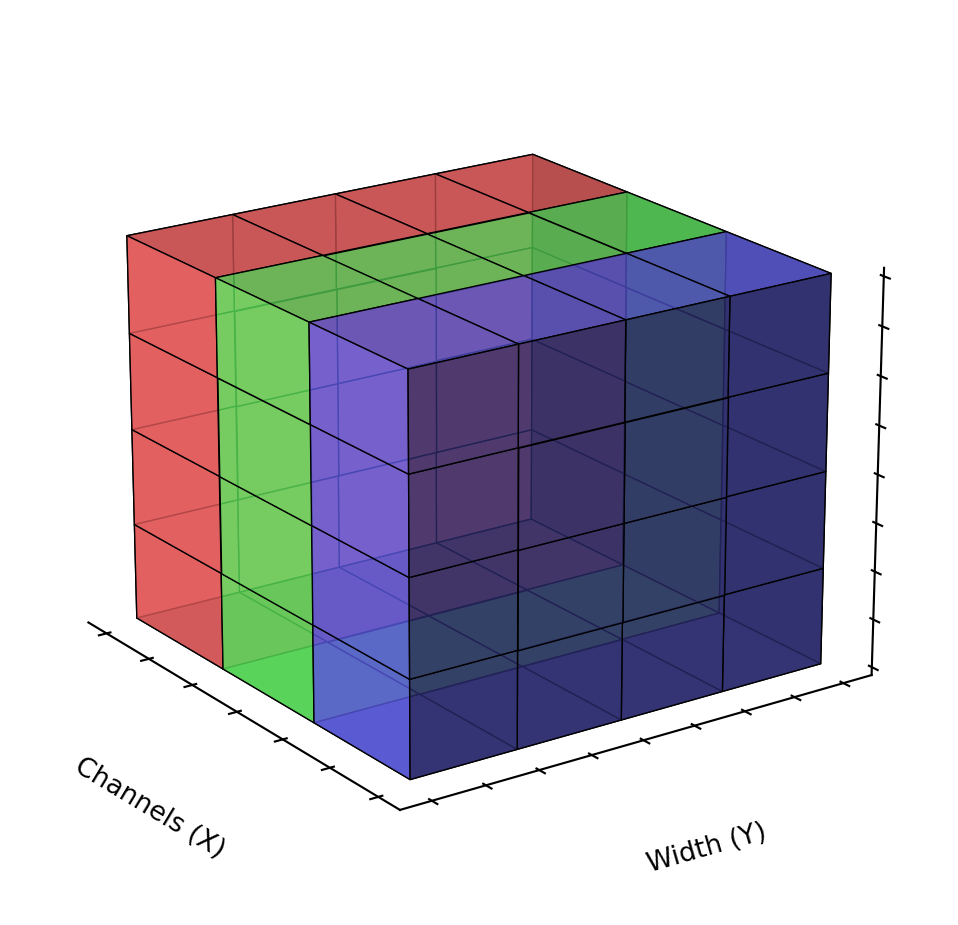
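A small NumPy sketch of such a matrix, assuming the same convention (`0` is black, `1` is white). The pixel positions are arbitrary choices for illustration.

```python
import numpy as np

# An 8x8 grayscale image, all black to start with.
img = np.zeros((8, 8), dtype=np.uint8)

img[0, 0] = 1   # the origin: top-left corner
img[2, 5] = 1   # row 2 (counting down), column 5 (counting right)

height, width = img.shape
print(height, width)   # 8 8
print(img)
```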

Color Images: The RGB Channels

- Images are 3D matrices (height × width × 3 channels).

- RGB: Red, Green, Blue. Values range from `0` (dark) to `255` (bright).
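As a rough sketch, a color image can be built as a height × width × 3 NumPy array. The 4 × 6 size and the colors below are arbitrary example values.

```python
import numpy as np

height, width = 4, 6
rgb = np.zeros((height, width, 3), dtype=np.uint8)   # all black

rgb[:, :, 0] = 255            # channel 0 (red) at full intensity everywhere
rgb[0, 0] = (255, 255, 255)   # make the top-left pixel white

# Each channel on its own is a 2D grayscale-like matrix.
red, green, blue = rgb[:, :, 0], rgb[:, :, 1], rgb[:, :, 2]

print(rgb.shape)    # (4, 6, 3)
print(rgb[1, 1])    # [255   0   0]
```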

Essential Image Libraries

- OpenCV: Very fast C++ library with Python bindings. Uses BGR channel order by default. Great for video streams and low-level transforms.

- Pillow (PIL): Standard Python image library. Uses RGB. Easy to use for simple drawing and resizing.

- NumPy: The core math library. Images loaded with OpenCV are `numpy` multi-dimensional arrays (tensors), and Pillow images convert to them easily.

Image Data Types: uint8 vs Floats

- Pixels are usually 8-bit unsigned integers (`uint8`), meaning values range from `0` to `255`.

- `0` is Black. `255` is White (in grayscale) or full intensity (in RGB).

- Deep Learning: Models like YOLO often prefer normalized inputs. We convert `0-255` to floats between `0.0` and `1.0` by dividing by `255.0`.
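A minimal sketch of the conversion, using a made-up three-pixel image. The last line shows a related pitfall worth knowing: `uint8` arithmetic wraps around rather than saturating, which is one more reason to convert to floats before doing math on pixels.

```python
import numpy as np

img_u8 = np.array([[0, 128, 255]], dtype=np.uint8)

# Normalize to [0.0, 1.0] for a model.
img_f32 = img_u8.astype(np.float32) / 255.0

# Convert back to uint8 for display or saving.
restored = np.round(img_f32 * 255.0).astype(np.uint8)

# Pitfall: uint8 math wraps modulo 256 (255 + 10 becomes 9).
wrapped = img_u8 + np.uint8(10)

print(img_f32.dtype, restored, wrapped)
```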

Coordinates vs. Array Indexing (The “Gotcha”)

- We think in geometry: `(x, y)` = `(width, height)`.

- NumPy thinks in matrices: `matrix[row, col]` = `matrix[y, x]`.

- This causes endless confusion when cropping with bounding boxes `(x1, y1, x2, y2)`.
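One way to keep the two conventions apart is to isolate the swap in a single helper. The `crop` function below is a hypothetical sketch; it assumes integer pixel coordinates with `x2` and `y2` exclusive, which may differ from the box format a given detector returns.

```python
import numpy as np

def crop(image, box):
    """Crop a box given as (x1, y1, x2, y2); x2 and y2 are exclusive."""
    x1, y1, x2, y2 = box
    # Rows (y) come first, columns (x) second.
    return image[y1:y2, x1:x2]

image = np.arange(6 * 10).reshape(6, 10)   # height 6, width 10
patch = crop(image, (2, 1, 7, 4))          # 5 pixels wide, 3 pixels tall

print(patch.shape)   # (3, 5)
```

Writing `image[x1:x2, y1:y2]` instead would not raise an error here. It would silently return the wrong region, which is what makes this mistake hard to spot.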

The “Smurf Effect”: BGR vs. RGB

- OpenCV historically uses Blue-Green-Red (BGR).

- Most other libraries (Matplotlib, Pillow) expect Red-Green-Blue (RGB).

- If you read an image with `cv2.imread()` and plot it directly in matplotlib, Red and Blue channels are swapped!

- Faces look blue, skies look red.
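The fix is to reverse the channel axis before plotting. In OpenCV this is usually done with `cv2.cvtColor(img, cv2.COLOR_BGR2RGB)`; the sketch below does the same swap with plain NumPy slicing on a made-up one-pixel array standing in for what `cv2.imread()` would return.

```python
import numpy as np

# Stand-in for a cv2.imread() result: one pure-blue pixel in BGR order.
bgr = np.array([[[255, 0, 0]]], dtype=np.uint8)

# Reverse the last axis to turn BGR into RGB.
rgb = bgr[..., ::-1]

print(bgr[0, 0])   # [255   0   0]  (blue first)
print(rgb[0, 0])   # [  0   0 255]  (blue last)
```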

Image Resolution and Resizing

- Resolution: Dimensions of the image `(Width × Height)`.

- Deep Learning models typically require fixed input sizes (e.g., `640x640`).

- Aspect Ratio: The ratio of width to height.

- Direct Resizing: Squashes or stretches the image, changing the aspect ratio (causes distortion).

- Letterboxing: Resizes while maintaining aspect ratio, then pads the remaining area with a solid color.
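Letterboxing can be sketched in NumPy as follows. This is an illustration under stated assumptions, not any particular library's implementation: the `letterbox` helper is hypothetical, the gray `pad_value` of 114 is an arbitrary choice, and the resize is a crude nearest-neighbor lookup where a real pipeline would normally use an interpolating resize from OpenCV or Pillow.

```python
import numpy as np

def letterbox(image, target=640, pad_value=114):
    """Fit an image into a target x target square, keeping its aspect ratio."""
    h, w = image.shape[:2]
    scale = min(target / h, target / w)          # shrink or grow to fit
    new_h = max(1, round(h * scale))
    new_w = max(1, round(w * scale))

    # Nearest-neighbor resize: pick a source row/column for each output pixel.
    rows = np.minimum((np.arange(new_h) / scale).astype(int), h - 1)
    cols = np.minimum((np.arange(new_w) / scale).astype(int), w - 1)
    resized = image[rows[:, None], cols]

    # Paste the resized image in the center of a solid-color canvas.
    canvas = np.full((target, target) + image.shape[2:], pad_value, dtype=image.dtype)
    top, left = (target - new_h) // 2, (target - new_w) // 2
    canvas[top:top + new_h, left:left + new_w] = resized
    return canvas, scale, (left, top)

frame = np.zeros((720, 1280, 3), dtype=np.uint8)   # height 720, width 1280
boxed, scale, (left, top) = letterbox(frame)
print(boxed.shape, scale, left, top)   # (640, 640, 3) 0.5 0 140
```

Returning `scale` and the `(left, top)` offset is a deliberate choice: they are needed later to map bounding boxes predicted on the padded image back to the original frame.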

Conclusion

Wrapping up Image Foundations

Summary

- Light & Cameras: How digital sensors capture light and turn it into data.

- Pixels & Channels: Grayscale (2D) vs. Color RGB (3D) representations.

- Libraries: OpenCV, Pillow, and NumPy basics.

- Gotchas: BGR vs RGB (The Smurf Effect) and Array Indexing vs Coordinates.

Next Steps

Next up: YOLO Inference

- Using these images with state-of-the-art Computer Vision models.

- Running inference directly out-of-the-box.

Q&A

Thank You!

Any questions?